Run Experiments Anywhere With Minimal Effort

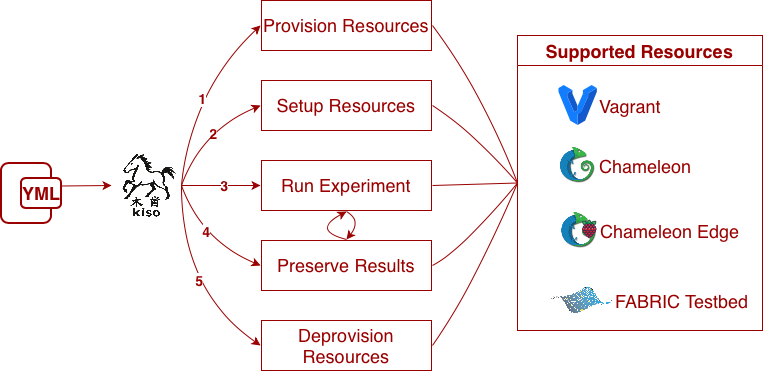

Kiso helps researchers run and reproduce experiments across edge, cloud, and testbed environments such as FABRIC and Chameleon. Define your experiments declaratively, and let Kiso handle the infrastructure complexity.

- Running workflow experiments across testbeds, with built-in support for Pegasus.

- Running agentic experiments.

What does Kiso do?

Kiso in action

# Install Kiso

$ pip install 'kiso[all]'

# Define your experiment in YAML

$ vim experiment.yml

# Provision resources across multiple testbeds

$ kiso up

# Run across multiple testbeds

$ kiso run

# Deprovision resources

$ kiso down
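The lifecycle above can be scripted so that resources are released even when an earlier step fails. The following is a minimal sketch, not part of Kiso itself: only `kiso up`, `kiso run`, and `kiso down` come from the steps above, while the `run_experiment` function name and the always-clean-up policy are illustrative assumptions.

```shell
#!/usr/bin/env bash
# Sketch: wrap the Kiso lifecycle in one function.
# Assumption: `kiso up`, `kiso run`, and `kiso down` behave as in the steps above.
# `run_experiment` is a hypothetical helper name, not a Kiso command.

run_experiment() (
  # The function body is a subshell, so this EXIT trap is scoped to one call.
  # It runs `kiso down` when the subshell exits, whether or not the
  # earlier steps succeeded.
  trap 'kiso down' EXIT

  # Provision resources, then run the experiment only if provisioning succeeded.
  kiso up && kiso run
)
```

Call `run_experiment` once the experiment has been defined. If provisioning fails, the run step is skipped, but deprovisioning is still attempted.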